Master's Research

During my Master's course I was interested in novel topologies for permanent magnet motors. My general interest was the design and control of electrical machines and electromagnetic phenomena for transportation (trains, ships, submarines). Please check the award-winning ICEMS2009 poster and the paper.

Datasets:

To download this dataset, please visit the project webpage. The image on the left is a 2D t-SNE projection of all the objects in the dataset, each represented by its attribute vector.

To download this dataset, please visit the project webpage. The image on the left is a montage of four images from the dataset that include the attribute 'camping'.

Teaching:

Practical Machine Learning Tool (Leadership in Environmental and Digital innovation for Sustainability (LEADS) Summer School 2022)

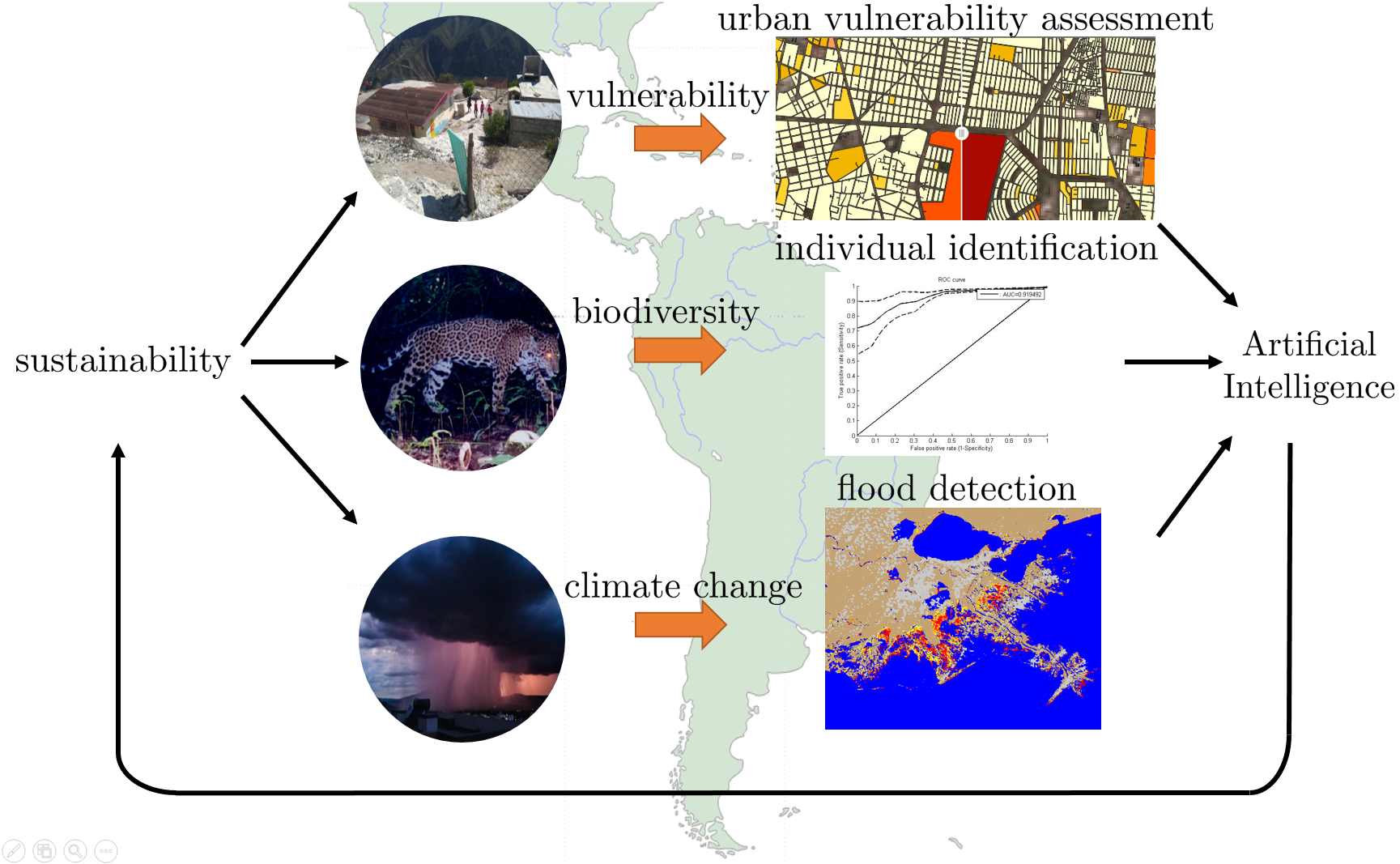

Learn ML to Impact Climate Change (Leadership in Environmental and Digital innovation for Sustainability (LEADS) Summer School 2021)

Deep Learning for Computer Vision: Tufts University Spring 2017

CSCI 2951T: Data-driven Computer Vision, Brown University

Workshops:

- Fall 2017: COCO + Places 2017: Workshop for the COCO and Places challenges at ICCV 2017.

- Fall 2017: GroupSight 2017: Second Workshop on Human Computation for Image and Video Analysis @ HCOMP 2017.

- Fall 2016: ILSVRC + COCO 2016: Workshop for the COCO and ImageNet challenges at ECCV 2016.

- Fall 2016: GroupSight 2016: Workshop on Human Computation for Image and Video Analysis @ HCOMP 2016.

- Fall 2015: ILSVRC + COCO 2015: Workshop for the COCO and ImageNet challenges at ICCV 2015.